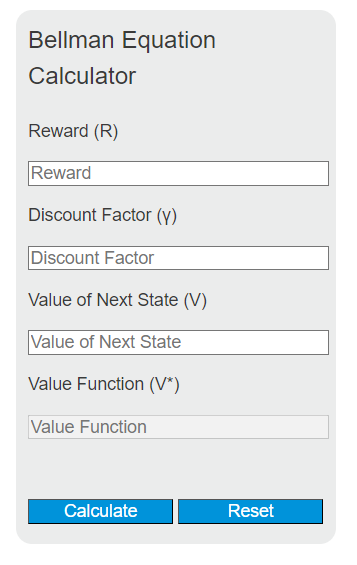

Calculate the missing value in the Bellman equation from reward, discount factor, and next state value to get the updated state value.


Bellman Equation Formula

The Bellman equations relate the value of a state to the expected immediate reward plus the discounted value of successor states. For a Markov Decision Process (MDP), common forms are shown below. (The calculator on this page uses the simplified deterministic one-step form V(s)=R+γV(s’).)

\begin{aligned}
V^\pi(s) &= \sum_a \pi(a\mid s)\sum_{s'} P(s'\mid s,a)\Big(R(s,a,s')+\gamma V^\pi(s')\Big)\\
V^*(s) &= \max_a \sum_{s'} P(s'\mid s,a)\Big(R(s,a,s')+\gamma V^*(s')\Big)
\end{aligned}

Variables:

- V^π(s) is the state-value function under policy π (expected return starting from state s and following π thereafter)

- V*(s) is the optimal state-value function (expected return when acting optimally)

- π(a|s) is the policy’s probability of taking action a in state s

- P(s’|s,a) is the transition probability to next state s’ after taking action a in state s

- R(s,a,s’) is the immediate reward received on the transition from s to s’ after action a (often written as a random reward inside the expectation)

- γ is the discount factor (typically 0 ≤ γ ≤ 1; often γ < 1 for continuing/infinite-horizon tasks, and γ = 1 is common in episodic/finite-horizon settings)
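As a rough illustration of the two general forms, the sketch below performs a single backup for one state of a tiny tabular MDP. The state names, action names, probabilities, rewards, and the dictionary layout are all hypothetical and chosen only to keep the arithmetic easy to follow; they are not taken from the calculator.

```python
def expectation_backup(s, V, policy, P, R, gamma):
    """One Bellman expectation backup for state s under a stochastic policy."""
    total = 0.0
    for a, pi_a in policy[s].items():          # sum over actions, weighted by pi(a|s)
        for s_next, p in P[s][a].items():      # sum over next states, weighted by P(s'|s,a)
            total += pi_a * p * (R[(s, a, s_next)] + gamma * V[s_next])
    return total


def optimality_backup(s, V, P, R, gamma):
    """One Bellman optimality backup for state s (max over actions)."""
    return max(
        sum(p * (R[(s, a, s_next)] + gamma * V[s_next])
            for s_next, p in P[s][a].items())
        for a in P[s]
    )


# Hypothetical two-state MDP; only state "A" is backed up here.
P = {"A": {"stay": {"A": 1.0}, "go": {"A": 0.5, "B": 0.5}}}
R = {("A", "stay", "A"): 1.0, ("A", "go", "A"): 0.0, ("A", "go", "B"): 4.0}
V = {"A": 2.0, "B": 10.0}                      # current value estimates
policy = {"A": {"stay": 0.5, "go": 0.5}}       # pi(a|s)
gamma = 0.5

print(expectation_backup("A", V, policy, P, R, gamma))  # 3.5
print(optimality_backup("A", V, P, R, gamma))           # 5.0
```

Under these assumed numbers, "stay" is worth 2.0 and "go" is worth 5.0, so the policy average is 3.5 and the maximum is 5.0.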

In the special case where you already know a single next state s’ (deterministic transition, no action choice being modeled), the update reduces to: V(s) = R + γV(s’). The calculator above performs algebra on this simplified one-step form.
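The algebra on the simplified form can be sketched as follows, assuming the calculator solves for whichever one of the four quantities is left blank. The function name `solve_bellman` and its argument names are hypothetical and do not reflect the calculator's actual implementation.

```python
def solve_bellman(v=None, r=None, gamma=None, v_next=None):
    """Solve V(s) = R + gamma * V(s') for the single argument left as None."""
    given = {"v": v, "r": r, "gamma": gamma, "v_next": v_next}
    if sum(val is None for val in given.values()) != 1:
        raise ValueError("exactly one argument must be left as None")

    if v is None:
        return r + gamma * v_next              # V(s) = R + gamma * V(s')
    if r is None:
        return v - gamma * v_next              # R = V(s) - gamma * V(s')
    if gamma is None:
        if v_next == 0:
            raise ZeroDivisionError("gamma is undetermined when V(s') is 0")
        return (v - r) / v_next                # gamma = (V(s) - R) / V(s')
    if gamma == 0:
        raise ZeroDivisionError("V(s') is undetermined when gamma is 0")
    return (v - r) / gamma                     # V(s') = (V(s) - R) / gamma


print(solve_bellman(r=5, gamma=0.9, v_next=10))   # forward: updated state value
print(solve_bellman(v=14, r=5, v_next=10))        # rearranged: discount factor
```

Note that the two rearrangements that divide (for γ and for V(s’)) have no unique answer when the divisor is zero, which is why the sketch raises an error in those cases.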

What is the Bellman Equation?

The Bellman equations are recursive relationships that are central to dynamic programming and reinforcement learning. They express the value of a state (or state-action pair) in terms of the expected immediate reward and the (discounted) value of subsequent states. The Bellman expectation equation describes the value under a fixed policy, and the Bellman optimality equation characterizes the optimal value function for a Markov Decision Process (MDP).
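To make the recursive character concrete, here is a minimal sketch that repeatedly applies the one-step backup to a single state that transitions back to itself. The self-loop setup and the function name `iterate_self_loop` are assumptions made for illustration. In that case the equation V = R + γV has the closed-form solution V = R / (1 − γ) when γ < 1, and the repeated backups should approach it.

```python
def iterate_self_loop(reward, gamma, sweeps=60):
    """Repeatedly apply V <- R + gamma * V for a state whose successor is itself."""
    v = 0.0                                    # arbitrary initial estimate
    for _ in range(sweeps):
        v = reward + gamma * v                 # one Bellman backup
    return v


approx = iterate_self_loop(reward=1.0, gamma=0.5)
closed_form = 1.0 / (1.0 - 0.5)                # R / (1 - gamma)
print(approx, closed_form)
```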

How to Calculate the Value Function Using the Bellman Equation

The following steps outline how to calculate a one-step value update using the Bellman Equation.

- First, determine the immediate reward (R) for the transition you are modeling.

- Next, determine the discount factor (γ), typically between 0 and 1 inclusive.

- Then, determine the value of the next state (V(s’)).

- Use the simplified one-step Bellman backup formula: V(s) = R + γ * V(s’).

- Finally, calculate the updated value V(s) for the current state.

- After inserting the variables and calculating the result, check your answer with the calculator above.
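The steps above can be sketched as a small function. The name `bellman_one_step` is hypothetical, and the range check on γ reflects the typical 0 to 1 range rather than a hard requirement of the formula.

```python
def bellman_one_step(reward, gamma, next_value):
    """Simplified one-step backup: V(s) = R + gamma * V(s')."""
    if not 0.0 <= gamma <= 1.0:
        raise ValueError("gamma is expected to lie in [0, 1]")
    discounted_next = gamma * next_value       # discount the next state's value
    return reward + discounted_next            # add the immediate reward


updated = bellman_one_step(reward=2.0, gamma=0.5, next_value=8.0)
print(updated)  # 6.0
```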

Example Problem:

Use the following variables as an example problem to test your knowledge.

Immediate reward (R) = 5

Discount factor (γ) = 0.9

Value of the next state (V(s’)) = 10

Updated value: V(s) = 5 + 0.9 × 10 = 14