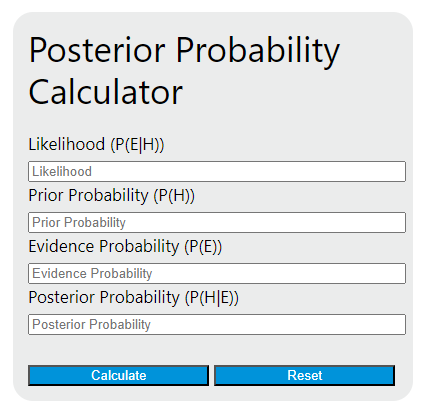

Calculate posterior probability with Bayes' theorem from likelihood, prior, and evidence probability, or solve for a missing value.

Posterior Probability Formula

The posterior probability is the updated probability of a hypothesis after new evidence is observed. This calculator applies Bayes' theorem, which relates four quantities: the posterior probability, the likelihood, the prior probability, and the evidence probability. Given any three of them, it solves for the fourth.

$$P(H\mid E) = \frac{P(E\mid H)\cdot P(H)}{P(E)}$$

In plain language, you start with an initial belief about a hypothesis, measure how consistent the evidence is with that hypothesis, and then normalize by the overall probability of seeing that evidence at all.
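As a minimal sketch, the formula translates directly into a small Python function. The function name `posterior_probability` and its parameter names are illustrative choices, not part of the calculator itself.

```python
def posterior_probability(likelihood: float, prior: float, evidence: float) -> float:
    """Return P(H|E) = P(E|H) * P(H) / P(E)."""
    if evidence <= 0:
        # P(E) is the divisor, so it must be strictly positive.
        raise ValueError("evidence probability must be greater than 0")
    return likelihood * prior / evidence


# Illustrative inputs: P(E|H) = 0.8, P(H) = 0.6, P(E) = 0.5
print(round(posterior_probability(0.8, 0.6, 0.5), 4))  # 0.96
```

The result is rounded only for display; the underlying value is an ordinary floating-point number.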

Variable Definitions

| Term | Notation | Meaning | Valid Range |
|---|---|---|---|
| Posterior probability | $P(H\mid E)$ | The updated probability that the hypothesis is true after considering the evidence. | Between 0 and 1 |
| Likelihood | $P(E\mid H)$ | The probability of observing the evidence if the hypothesis is true. | Between 0 and 1 |
| Prior probability | $P(H)$ | Your belief in the hypothesis before the new evidence is considered. | Between 0 and 1 |
| Evidence probability | $P(E)$ | The overall probability of the evidence occurring under all relevant possibilities. | Greater than 0 and at most 1 |

Rearranged Forms

Since this calculator can solve for any missing variable when the other three are known, these equivalent forms are useful:

| Solve For | Formula |
|---|---|
| Posterior probability | $P(H\mid E) = \frac{P(E\mid H)\cdot P(H)}{P(E)}$ |
| Likelihood | $P(E\mid H) = \frac{P(H\mid E)\cdot P(E)}{P(H)}$ |
| Prior probability | $P(H) = \frac{P(H\mid E)\cdot P(E)}{P(E\mid H)}$ |
| Evidence probability | $P(E) = \frac{P(E\mid H)\cdot P(H)}{P(H\mid E)}$ |
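The four rearranged forms can be sketched as one function that solves for whichever value is left out. This is an illustration under assumed names (`solve_bayes` and its keyword arguments are hypothetical), not the calculator's actual implementation.

```python
from typing import Optional


def solve_bayes(
    posterior: Optional[float] = None,
    likelihood: Optional[float] = None,
    prior: Optional[float] = None,
    evidence: Optional[float] = None,
) -> float:
    """Solve for the single argument left as None using Bayes' theorem."""
    values = {
        "posterior": posterior,
        "likelihood": likelihood,
        "prior": prior,
        "evidence": evidence,
    }
    missing = [name for name, value in values.items() if value is None]
    if len(missing) != 1:
        raise ValueError("provide exactly three of the four values")

    target = missing[0]
    # Each branch mirrors one row of the rearranged-forms table.
    if target == "posterior":
        numerator, denominator = likelihood * prior, evidence
    elif target == "likelihood":
        numerator, denominator = posterior * evidence, prior
    elif target == "prior":
        numerator, denominator = posterior * evidence, likelihood
    else:  # target == "evidence"
        numerator, denominator = likelihood * prior, posterior

    if denominator == 0:
        raise ZeroDivisionError(f"cannot solve for {target}: the divisor is 0")
    return numerator / denominator


print(round(solve_bayes(likelihood=0.8, prior=0.6, evidence=0.5), 4))    # 0.96
print(round(solve_bayes(posterior=0.96, likelihood=0.8, evidence=0.5), 4))  # 0.6
```

Using keyword arguments keeps it clear which quantity is being solved for, at the cost of a slightly longer call.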

How to Use the Calculator

- Enter any three known values.

- Make sure each probability is expressed as a decimal between 0 and 1.

- Click calculate to solve for the missing quantity.

- Interpret the result as an updated belief after the evidence is taken into account.

If your data is given as percentages, convert them before entry. For example, 80% should be entered as 0.80.
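If you are preparing inputs in a script, the conversion can be sketched as a one-line helper. The name `percent_to_probability` is a hypothetical choice for illustration.

```python
def percent_to_probability(percent: float) -> float:
    """Convert a percentage such as 80 into a decimal probability such as 0.8."""
    if not 0 <= percent <= 100:
        raise ValueError("percentage must be between 0 and 100")
    return percent / 100


print(percent_to_probability(80))  # 0.8
```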

Example

Suppose the likelihood is 0.8, the prior probability is 0.6, and the evidence probability is 0.5. Substituting those values gives:

$$P(H\mid E) = \frac{0.8\cdot 0.6}{0.5} = 0.96$$

This means the updated probability of the hypothesis is 0.96, or 96%, after considering the evidence.

How to Interpret the Result

- Higher than the prior: the evidence supports the hypothesis.

- Lower than the prior: the evidence weakens confidence in the hypothesis.

- Close to the prior: the evidence adds little new information.

- Very close to 1: the evidence strongly favors the hypothesis.

- Very close to 0: the evidence strongly argues against the hypothesis.

Important Input Checks

- All probabilities should be between 0 and 1.

- The evidence probability cannot be zero, because division by zero is undefined.

- The final answer should also be between 0 and 1. If it exceeds 1, the inputs are not internally consistent: the evidence probability can never be smaller than the product of the likelihood and the prior.

- Likelihood and evidence probability are not the same thing. Likelihood is conditional on the hypothesis being true, while evidence probability is the overall chance of observing the evidence.
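The checks above can be sketched as a small validation routine that runs before the posterior is computed. The function name `check_inputs` and the wording of its messages are assumptions made for this example.

```python
from typing import List


def check_inputs(likelihood: float, prior: float, evidence: float) -> List[str]:
    """Return a list of problems found; an empty list means the inputs look usable."""
    problems = []
    for name, value in (("likelihood", likelihood), ("prior", prior), ("evidence", evidence)):
        if not 0 <= value <= 1:
            problems.append(f"{name} must be between 0 and 1")
    if evidence == 0:
        problems.append("evidence must be greater than 0")
    # Only test consistency once the basic range checks have passed.
    # P(E) >= P(E|H) * P(H) must hold, otherwise the posterior exceeds 1.
    if not problems and likelihood * prior > evidence:
        problems.append("posterior would exceed 1: evidence is smaller than likelihood * prior")
    return problems


print(check_inputs(0.8, 0.6, 0.5))  # []
print(check_inputs(0.9, 0.9, 0.5))  # one consistency problem, since 0.81 > 0.5
```

Returning a list of messages rather than raising on the first problem lets a caller report every issue at once.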

Finding the Evidence Probability

In many real problems, the evidence probability is computed from multiple scenarios rather than estimated directly. For a two-outcome case with a hypothesis and its complement, the evidence probability is:

$$P(E) = P(E\mid H)\cdot P(H) + P(E\mid \neg H)\cdot P(\neg H)$$

This version is especially useful in medical testing, classification models, fraud detection, and reliability analysis.
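A minimal sketch of this two-outcome calculation follows. The function names and the parameter `likelihood_if_false` (standing for the probability of the evidence when the hypothesis is false) are illustrative, and the value 0.05 used for it is an assumed input, chosen so that the evidence probability comes out to 0.5.

```python
def evidence_probability(likelihood: float, prior: float, likelihood_if_false: float) -> float:
    """P(E) = P(E|H) * P(H) + P(E|not H) * P(not H), with P(not H) = 1 - P(H)."""
    return likelihood * prior + likelihood_if_false * (1 - prior)


def posterior_from_rates(likelihood: float, prior: float, likelihood_if_false: float) -> float:
    """Compute P(H|E) when P(E) is not given directly."""
    evidence = evidence_probability(likelihood, prior, likelihood_if_false)
    if evidence == 0:
        raise ValueError("the evidence has probability 0 under both outcomes")
    return likelihood * prior / evidence


# Assumed inputs: P(E|H) = 0.8, P(H) = 0.6, P(E|not H) = 0.05
print(round(evidence_probability(0.8, 0.6, 0.05), 4))  # 0.5
print(round(posterior_from_rates(0.8, 0.6, 0.05), 4))  # 0.96
```

In a diagnostic-testing setting, `likelihood` would play the role of the test's sensitivity and `likelihood_if_false` the false-positive rate.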

Common Uses of Posterior Probability

- Updating disease risk after a diagnostic test result

- Estimating whether an email is spam after certain keywords appear

- Revising machine learning classifications when new features are observed

- Assessing failure probability after sensor readings or inspection data

- Evaluating competing hypotheses in Bayesian statistics and decision analysis

Common Mistakes

- Entering percentages as whole numbers instead of decimals

- Using the probability of the hypothesis as if it were the probability of the evidence

- Ignoring the base rate contained in the prior probability

- Assuming a large likelihood automatically means a large posterior probability

- Using inputs that produce an impossible posterior above 1 or below 0
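To see why a large likelihood does not guarantee a large posterior, the sketch below holds the likelihood at 0.99 and varies only the prior. All numbers are made up for illustration, including the 0.05 probability of the evidence when the hypothesis is false, and the function name is hypothetical.

```python
def posterior_two_outcomes(likelihood: float, prior: float, likelihood_if_false: float) -> float:
    """P(H|E) with P(E) expanded over the hypothesis and its complement."""
    evidence = likelihood * prior + likelihood_if_false * (1 - prior)
    return likelihood * prior / evidence


# Same likelihood (0.99) and same rate under the complement (0.05); only the prior changes.
for prior in (0.001, 0.01, 0.1, 0.5):
    result = posterior_two_outcomes(0.99, prior, 0.05)
    print(f"prior={prior:<6} posterior={result:.3f}")
```

With a prior of 0.001 the posterior stays near 2%, while a prior of 0.5 pushes it to roughly 95%, even though the likelihood is identical in both cases.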

Posterior Probability vs. Prior Probability

The prior probability reflects belief before seeing new information. The posterior probability reflects belief after updating with evidence. The larger the gap between the two, the more informative the evidence is.

Frequently Asked Questions

Why can the posterior be much larger than the prior?

The posterior equals the prior multiplied by the ratio $P(E\mid H)/P(E)$. If the evidence is much more likely when the hypothesis is true than it is overall, that ratio is large and the update can be substantial.

Can the posterior probability ever be exactly equal to the prior?

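Yes. That happens when $P(E\mid H) = P(E)$, meaning the evidence is just as likely when the hypothesis is true as it is overall, so observing it provides no net update to your belief.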

Why is evidence probability in the denominator?

It normalizes the result so the updated probability remains on a valid probability scale.

What does a posterior of 0.96 mean?

It means that after incorporating the evidence, the hypothesis has a 96% probability under the assumptions of the model and the inputs provided.