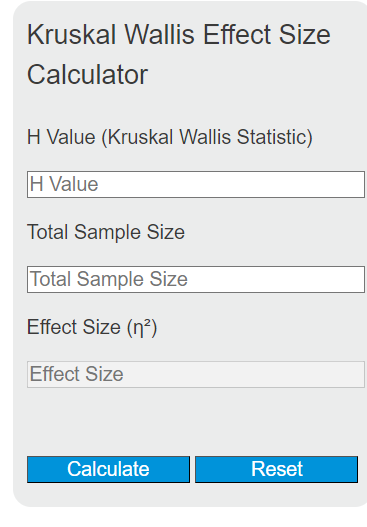

Calculate Kruskal-Wallis effect size η² from the H statistic, total sample size, and number of groups, or find missing H or N values.

Kruskal-Wallis Effect Size Formulas

There are several formulas used to quantify the magnitude of group differences detected by the Kruskal-Wallis H test. Each captures a slightly different aspect of the data, and the best choice depends on your analysis goals and reporting conventions.

Eta-Squared (Simplified)

$$\eta^2 = \frac{H}{N - 1}$$

This is the most commonly cited formula in introductory statistics resources. H is the Kruskal-Wallis test statistic and N is the total number of observations across all groups. The result ranges from 0 to 1, where higher values indicate a larger proportion of variability in ranks attributable to group membership. This formula does not account for the number of groups being compared.
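Here is a minimal sketch of the simplified formula. It also shows how a calculator could solve for a missing H or N by rearranging η² = H/(N - 1). The function names and the example inputs (H = 14.32, N = 120) are hypothetical and chosen only for illustration.

```python
def eta_squared_simple(h: float, n: int) -> float:
    """Simplified Kruskal-Wallis eta-squared: H / (N - 1)."""
    if n < 2:
        raise ValueError("N must be at least 2")
    return h / (n - 1)


def h_from_eta_squared(eta2: float, n: int) -> float:
    """Solve eta^2 = H / (N - 1) for a missing H."""
    return eta2 * (n - 1)


def n_from_eta_squared(h: float, eta2: float) -> float:
    """Solve eta^2 = H / (N - 1) for a missing N (eta^2 must be positive)."""
    if eta2 <= 0:
        raise ValueError("eta^2 must be positive to solve for N")
    return h / eta2 + 1


# Hypothetical inputs: H = 14.32 from N = 120 observations
eta2 = eta_squared_simple(14.32, 120)
print(round(eta2, 4))                           # 0.1203
print(round(h_from_eta_squared(eta2, 120), 2))  # 14.32
print(round(n_from_eta_squared(14.32, eta2)))   # 120
```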

Eta-Squared (Group-Adjusted)

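$$\eta^2 = \frac{H - k + 1}{N - k}$$

This version adjusts for the number of groups (k) and is the formula used in the R package rstatix. It corrects for the fact that the expected value of H under the null hypothesis is k - 1, not zero. The gap between the simplified and group-adjusted values works out to (k - 1)(1 - H/(N - 1))/(N - k). With only two groups this gap is at most 1/(N - 2), so the two formulas agree closely in reasonably large samples. With three or more groups the correction grows, and the group-adjusted formula gives a less biased estimate of explained rank variance. Because k - 1 is subtracted from H, the adjusted value falls below zero whenever H < k - 1.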
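The group-adjusted formula can be sketched the same way. The sketch assumes the (H - k + 1)/(N - k) form given above, and the function names and inputs are hypothetical. The last line shows that the estimate turns negative when H is below k - 1.

```python
def eta_squared_adjusted(h: float, n: int, k: int) -> float:
    """Group-adjusted Kruskal-Wallis eta-squared: (H - k + 1) / (N - k)."""
    if k < 2 or n <= k:
        raise ValueError("need k >= 2 groups and N > k observations")
    return (h - k + 1) / (n - k)


def eta_squared_simple(h: float, n: int) -> float:
    """Simplified version, H / (N - 1), for comparison."""
    return h / (n - 1)


# Hypothetical H = 14.32 from N = 120 observations in k = 3 groups
print(round(eta_squared_adjusted(14.32, 120, 3), 4))  # 0.1053
print(round(eta_squared_simple(14.32, 120), 4))       # 0.1203
# H below its null expectation (k - 1 = 2) yields a negative estimate
print(eta_squared_adjusted(1.5, 30, 3) < 0)           # True
```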

Epsilon-Squared

$$\varepsilon^2 = \frac{H}{(N^2 - 1)/(N + 1)}$$

Epsilon-squared is arguably the most widely recommended effect size for the Kruskal-Wallis test in recent methodological literature. It is mathematically equivalent to the eta-squared you would get by running a standard one-way ANOVA on the rank-transformed data (with H corrected for ties). Values range from 0 (no group differences) to 1 (complete separation of groups). Because (N² - 1)/(N + 1) simplifies to N - 1, this expression is algebraically identical to the simplified eta-squared formula above. The two names label the same number, so epsilon-squared is not more conservative than that formula. Only the group-adjusted version yields smaller values.
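The sketch below checks the equivalence with an ANOVA on ranks numerically. It computes a tie-corrected H from raw data and plugs it into the epsilon-squared formula. It then compares the result with SS_between / SS_total computed on the pooled ranks. The helpers and the data are hypothetical. The tie correction divides H by 1 - Σ(t³ - t)/(N³ - N), the standard adjustment, and the code assumes the data are not all tied.

```python
from collections import Counter


def average_ranks(values):
    """Rank values from 1..N, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for m in range(i, j + 1):
            ranks[order[m]] = (i + j) / 2 + 1  # 0-based positions i..j -> mean rank
        i = j + 1
    return ranks


def kruskal_h(groups):
    """Tie-corrected Kruskal-Wallis H from raw group data."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        total += sum(ranks[start:start + len(g)]) ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * total - 3 * (n + 1)
    ties = sum(t**3 - t for t in Counter(pooled).values())
    return h / (1 - ties / (n**3 - n))


def epsilon_squared(h, n):
    return h / ((n**2 - 1) / (n + 1))  # denominator simplifies to N - 1


def eta_squared_on_ranks(groups):
    """SS_between / SS_total from a one-way ANOVA run on the pooled ranks."""
    pooled = [x for g in groups for x in g]
    ranks = average_ranks(pooled)
    grand = sum(ranks) / len(ranks)
    ss_total = sum((r - grand) ** 2 for r in ranks)
    ss_between, start = 0.0, 0
    for g in groups:
        group_ranks = ranks[start:start + len(g)]
        ss_between += len(g) * (sum(group_ranks) / len(g) - grand) ** 2
        start += len(g)
    return ss_between / ss_total


# Hypothetical data containing two tied pairs (3.4 and 5.0)
groups = [[2.1, 3.4, 3.4, 5.0], [4.2, 5.0, 6.1], [6.8, 7.7, 8.0, 9.3]]
h = kruskal_h(groups)
n = sum(len(g) for g in groups)
print(abs(epsilon_squared(h, n) - eta_squared_on_ranks(groups)) < 1e-12)  # True
```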

Effect Size Interpretation Benchmarks

The table below summarizes the conventional thresholds used to classify effect magnitude. These benchmarks originate from Cohen's guidelines for behavioral sciences and have been adapted for rank-based analyses. Rigid application of cutoffs is discouraged in modern statistical practice; context and domain knowledge should always inform interpretation.

| Classification | Eta-Squared / Epsilon-Squared | Cliff's Delta (\|d\|) | Vargha-Delaney A |
|---|---|---|---|
| Small | 0.01 to < 0.06 | 0.11 to < 0.28 | 0.56 to < 0.64 |
| Medium | 0.06 to < 0.14 | 0.28 to < 0.43 | 0.64 to < 0.71 |
| Large | 0.14 or greater | 0.43 or greater | 0.71 or greater |

For eta-squared specifically, the value multiplied by 100 gives the approximate percentage of rank variance explained by group membership. An eta-squared of 0.09, for instance, suggests that about 9% of the variability in ranked outcomes is associated with group differences.
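The benchmark table can be encoded as a small lookup, assuming the lower bounds shown there. Several parts of this sketch are assumptions: the `classify` helper itself, the `"negligible"` label for values below the small band, and folding Vargha-Delaney A values below 0.5 to 1 - A so that direction is ignored.

```python
# Lower bounds of the small / medium / large bands from the benchmark table
BENCHMARKS = {
    "eta_squared": (0.01, 0.06, 0.14),      # also used for epsilon-squared
    "cliffs_delta": (0.11, 0.28, 0.43),     # applied to |d|
    "vargha_delaney_a": (0.56, 0.64, 0.71),
}


def classify(value: float, measure: str) -> str:
    small, medium, large = BENCHMARKS[measure]
    if measure == "cliffs_delta":
        value = abs(value)
    elif measure == "vargha_delaney_a":
        # Assumption: A below 0.5 is folded to 1 - A, so direction is ignored
        value = max(value, 1 - value)
    if value >= large:
        return "large"
    if value >= medium:
        return "medium"
    if value >= small:
        return "small"
    return "negligible"  # assumption: label for values below the "small" band


print(classify(0.09, "eta_squared"), f"{0.09 * 100:.0f}% of rank variance")
# medium 9% of rank variance
```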

Alternative Effect Size Measures

Beyond eta-squared and epsilon-squared, several other effect size statistics are used with the Kruskal-Wallis test, each offering a different interpretive lens.

Freeman's Theta ranges from 0 to 1 and measures the degree to which group membership predicts the rank ordering of observations. A value of 1 means the groups do not overlap at all: for every pair of groups, every observation in one group is greater than every observation in the other. A value of 0 indicates complete stochastic equality.
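The following sketch computes Freeman's theta for k groups using the common pairwise-dominance definition. For each pair of groups, it counts how often an observation from one group exceeds an observation from the other, and the reverse. It then sums the absolute differences and divides by the total number of cross-group pairs. The function name is hypothetical.

```python
from itertools import combinations


def freemans_theta(groups):
    """Sum over group pairs of |#(x > y) - #(x < y)|, divided by sum of n_i * n_j."""
    numerator = 0
    denominator = 0
    for a, b in combinations(groups, 2):
        greater = sum(1 for x in a for y in b if x > y)
        less = sum(1 for x in a for y in b if x < y)
        numerator += abs(greater - less)
        denominator += len(a) * len(b)
    return numerator / denominator


print(freemans_theta([[1, 2], [3, 4], [5, 6]]))  # 1.0 (no overlap between any groups)
print(freemans_theta([[1, 2, 3], [1, 2, 3]]))    # 0.0 (identical groups)
```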

Vargha and Delaney's A is a probability-based measure representing the likelihood that a randomly selected observation from one group exceeds a randomly selected observation from another, with ties counted as one half. A value of 0.50 indicates no difference between groups. Because it is expressed as a probability, it is one of the most intuitive effect size measures to communicate to non-technical audiences. For Kruskal-Wallis comparisons involving more than two groups, a common convention is to report the maximum pairwise A value across all group pairs.
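Vargha-Delaney A can be estimated by comparing every observation in one group with every observation in another. The sketch below counts ties as one half. `max_pairwise_a` is a hypothetical helper that implements the maximum-over-pairs convention by evaluating each pair in both directions, since A for (j, i) equals 1 - A for (i, j).

```python
from itertools import permutations


def vargha_delaney_a(x, y):
    """A = P(X > Y) + 0.5 * P(X = Y), estimated from all n_x * n_y pairs."""
    greater = sum(1 for a in x for b in y if a > b)
    ties = sum(1 for a in x for b in y if a == b)
    return (greater + 0.5 * ties) / (len(x) * len(y))


def max_pairwise_a(groups):
    """Largest A over all ordered group pairs, returned as (A, i, j)."""
    return max(
        (vargha_delaney_a(gi, gj), i, j)
        for (i, gi), (j, gj) in permutations(enumerate(groups), 2)
    )


# Hypothetical groups; the largest A (about 0.944) is group 1 over group 0
print(max_pairwise_a([[1, 2, 3], [3, 4, 5], [2, 3, 4]]))
```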

Cliff's Delta is linearly related to Vargha-Delaney A by the equation d = 2A - 1. It ranges from -1 to 1, with 0 representing stochastic equality. For a comparison of two groups, its absolute value equals Freeman's theta.
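The identity d = 2A - 1 can be verified numerically from the same dominance counts. In this sketch, ties count as one half in A and contribute nothing to d, and both quantities are computed directly. The helper names and data are hypothetical.

```python
def cliffs_delta(x, y):
    """d = [#(x > y) - #(x < y)] / (n_x * n_y)."""
    greater = sum(1 for a in x for b in y if a > b)
    less = sum(1 for a in x for b in y if a < b)
    return (greater - less) / (len(x) * len(y))


def vargha_delaney_a(x, y):
    """A = [#(x > y) + 0.5 * #(x == y)] / (n_x * n_y)."""
    greater = sum(1 for a in x for b in y if a > b)
    ties = sum(1 for a in x for b in y if a == b)
    return (greater + 0.5 * ties) / (len(x) * len(y))


x, y = [3, 4, 5], [1, 2, 3]
# Both values are 8/9, up to floating-point rounding
print(cliffs_delta(x, y), 2 * vargha_delaney_a(x, y) - 1)
```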

When to Use the Kruskal-Wallis Test

The Kruskal-Wallis H test is the non-parametric counterpart to one-way ANOVA. Choosing between them depends on the characteristics of your data and whether the assumptions of ANOVA are tenable.

Use Kruskal-Wallis when: your dependent variable is measured on an ordinal scale (e.g., Likert ratings, pain severity rankings), your data contain substantial outliers that would distort group means, the distributions are heavily skewed and sample sizes are small enough that the Central Limit Theorem offers limited protection, or normality tests such as Shapiro-Wilk reject the null hypothesis of normality within groups.

Consider staying with ANOVA when: your data are continuous and approximately symmetric, sample sizes per group are at least 15 to 20, or distributions have similar shapes even if they are not perfectly normal. One-way ANOVA is surprisingly robust to moderate departures from normality, and simulation studies show it maintains its nominal Type I error rate across many non-normal distributions when group sizes are roughly equal.

An important and often overlooked assumption of the Kruskal-Wallis test is that the group distributions have the same shape, differing only in location (median). This assumption is required before the result can be read as a difference in medians. When groups have different variances or different distributional forms, a significant result indicates that the distributions differ (for example, that one group tends to produce larger values than another), not specifically that their medians differ. Kruskal-Wallis therefore handles heteroscedasticity no better than classical ANOVA, and alternatives such as Welch's ANOVA or permutation tests may be more appropriate.

Post-Hoc Testing After a Significant Result

A statistically significant Kruskal-Wallis result tells you that at least one group differs from the others, but it does not identify which specific groups differ. Post-hoc pairwise comparisons are needed to localize the effect.

Dunn's Test is the most widely used post-hoc procedure for the Kruskal-Wallis test. Unlike running multiple Mann-Whitney U tests, Dunn's test preserves the original ranking of all observations across all groups rather than re-ranking within each pair. This maintains consistency with the omnibus test and avoids distortion of the rank structure. P-values from Dunn's test are typically adjusted using the Bonferroni correction or the Benjamini-Hochberg procedure to control the familywise error rate or false discovery rate, respectively.
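Here is a minimal sketch of Dunn's test using the usual large-sample z statistic with a tie adjustment. The z statistic is the difference in mean ranks, taken from the pooled ranking, divided by sqrt((N(N + 1)/12 - Σ(t³ - t)/(12(N - 1))) · (1/n_i + 1/n_j)). Each z gets a two-sided normal p-value and a Bonferroni adjustment over all k(k - 1)/2 pairs. The function names and data are hypothetical, and other adjustment methods can be substituted for Bonferroni.

```python
import math
from collections import Counter
from itertools import combinations


def average_ranks(values):
    """Rank values from 1..N, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for m in range(i, j + 1):
            ranks[order[m]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def dunn_test(groups):
    """Pairwise Dunn z-tests on the pooled ranking, Bonferroni-adjusted."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = average_ranks(pooled)  # one ranking shared by all comparisons
    mean_ranks, start = [], 0
    for g in groups:
        mean_ranks.append(sum(ranks[start:start + len(g)]) / len(g))
        start += len(g)
    ties = sum(t**3 - t for t in Counter(pooled).values())
    variance = n * (n + 1) / 12 - ties / (12 * (n - 1))
    pairs = list(combinations(range(len(groups)), 2))
    results = []
    for i, j in pairs:
        se = math.sqrt(variance * (1 / len(groups[i]) + 1 / len(groups[j])))
        z = (mean_ranks[i] - mean_ranks[j]) / se
        p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
        results.append((i, j, z, p, min(1.0, p * len(pairs))))  # Bonferroni
    return results


for i, j, z, p, p_adj in dunn_test([[1, 2, 3], [4, 5, 6], [7, 8, 9]]):
    print(f"groups {i} vs {j}: z = {z:.2f}, p = {p:.4f}, Bonferroni p = {p_adj:.4f}")
```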

Conover's Test (the Conover-Iman procedure) is an alternative that is generally more powerful than Dunn's test but also more liberal (higher Type I error rate). It compares mean ranks using the t-distribution with N - k degrees of freedom and a pooled variance of ranks that incorporates the omnibus Kruskal-Wallis H statistic.
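For the Conover-Iman version, the sketch below assumes the form given by Conover, in three parts. First, S² is the variance of the pooled ranks. Second, H is recomputed from S², which makes it tie-corrected. Third, each pair gets t = (mean rank_i - mean rank_j) / sqrt(S² · (N - 1 - H)/(N - k) · (1/n_i + 1/n_j)) with N - k degrees of freedom. Only the statistics are computed here. Turning t into a p-value needs a t-distribution CDF (for example `scipy.stats.t.sf`), which the Python standard library lacks. The names and data are hypothetical.

```python
import math
from itertools import combinations


def average_ranks(values):
    """Rank values from 1..N, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for m in range(i, j + 1):
            ranks[order[m]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def conover_iman_t(groups):
    """Pairwise Conover-Iman t statistics; returns (H, S^2, [(i, j, t, df), ...])."""
    pooled = [x for g in groups for x in g]
    n, k = len(pooled), len(groups)
    ranks = average_ranks(pooled)
    rank_sums, start = [], 0
    for g in groups:
        rank_sums.append(sum(ranks[start:start + len(g)]))
        start += len(g)
    correction = n * (n + 1) ** 2 / 4
    # S^2: variance of the pooled ranks (reduces to N(N + 1)/12 without ties)
    s2 = (sum(r * r for r in ranks) - correction) / (n - 1)
    # Kruskal-Wallis statistic expressed through S^2, which builds in the tie correction
    h = (sum(rs**2 / len(g) for rs, g in zip(rank_sums, groups)) - correction) / s2
    df = n - k
    out = []
    for i, j in combinations(range(k), 2):
        diff = rank_sums[i] / len(groups[i]) - rank_sums[j] / len(groups[j])
        # Undefined (division by zero) if H reaches N - 1
        se = math.sqrt(s2 * (n - 1 - h) / df * (1 / len(groups[i]) + 1 / len(groups[j])))
        out.append((i, j, diff / se, df))
    return h, s2, out


h, s2, pairs = conover_iman_t([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
for i, j, t, df in pairs:
    print(f"groups {i} vs {j}: t({df}) = {t:.2f}")
```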

Pairwise Mann-Whitney U tests with a Bonferroni or Holm correction are sometimes used, but they re-rank observations within each pair and therefore do not maintain strict consistency with the original Kruskal-Wallis analysis. They remain acceptable when the goal is to compare two specific groups using an independent ranking.
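The Bonferroni and Holm corrections mentioned here, and the Benjamini-Hochberg procedure mentioned for Dunn's test, can be sketched as plain functions over a list of raw p-values. These follow the standard step-down (Holm) and step-up (Benjamini-Hochberg) definitions. The function names and the example p-values are hypothetical.

```python
def bonferroni(pvals):
    """Multiply each p-value by the number of tests, capped at 1."""
    m = len(pvals)
    return [min(1.0, p * m) for p in pvals]


def holm(pvals):
    """Step-down Holm adjustment (controls the familywise error rate)."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])  # ascending p-values
    adjusted = [0.0] * m
    running = 0.0
    for rank, i in enumerate(order):
        running = max(running, min(1.0, (m - rank) * pvals[i]))  # keep monotone
        adjusted[i] = running
    return adjusted


def benjamini_hochberg(pvals):
    """Step-up Benjamini-Hochberg adjustment (controls the false discovery rate)."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i], reverse=True)  # descending
    adjusted = [0.0] * m
    running = 1.0
    for idx, i in enumerate(order):
        rank = m - idx  # 1-based rank in ascending order
        running = min(running, pvals[i] * m / rank)
        adjusted[i] = running
    return adjusted


p = [0.01, 0.04, 0.03]
print([round(v, 3) for v in bonferroni(p)])          # [0.03, 0.12, 0.09]
print([round(v, 3) for v in holm(p)])                # [0.03, 0.06, 0.06]
print([round(v, 3) for v in benjamini_hochberg(p)])  # [0.03, 0.04, 0.04]
```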

How to Report Kruskal-Wallis Results

When writing up Kruskal-Wallis results for publication, include the test statistic, degrees of freedom, p-value, and an effect size measure. The degrees of freedom for the Kruskal-Wallis test equal k - 1, where k is the number of groups.

A typical report follows this pattern: "A Kruskal-Wallis H test indicated a statistically significant difference in [outcome] across the three conditions, H(2) = 14.32, p < .001, eta-squared = 0.12." If post-hoc comparisons were performed, report those immediately after: "Dunn's post-hoc test with Bonferroni correction revealed that Group A (Mdn = 7.5) scored significantly higher than Group C (Mdn = 4.2), z = 3.41, p = .002, while no other pairwise differences reached significance."
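The p-value in a report like this one comes from the chi-square distribution with k - 1 degrees of freedom. In this standard-library sketch, the upper tail is computed in closed form for integer degrees of freedom. The p-value is then formatted without a leading zero, switching to "p < .001" for very small values (a common APA convention). The helper names are hypothetical.

```python
import math


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail chi-square probability for integer df (closed form)."""
    y = x / 2
    if df % 2 == 0:
        # Even df: exp(-y) * sum_{j < df/2} y^j / j!
        term, total = 1.0, 1.0
        for j in range(1, df // 2):
            term *= y / j
            total += term
        return math.exp(-y) * total
    # Odd df: start from erfc and add the half-integer gamma terms
    total = math.erfc(math.sqrt(y))
    for j in range(df // 2):
        total += math.exp(-y) * y ** (j + 0.5) / math.gamma(j + 1.5)
    return total


def apa_p(p: float) -> str:
    return "p < .001" if p < 0.001 else "p = " + f"{p:.3f}".lstrip("0")


def report_kruskal(h: float, k: int) -> str:
    df = k - 1
    return f"H({df}) = {h:.2f}, {apa_p(chi2_sf(h, df))}"


print(report_kruskal(14.32, 3))  # H(2) = 14.32, p < .001
```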

Always specify which effect size formula was used (eta-squared, epsilon-squared, etc.), the correction method applied to post-hoc p-values, and whether medians or mean ranks are being reported as measures of central tendency.

Limitations of Rank-Based Effect Sizes

Interpreting eta-squared or epsilon-squared as "percentage of variance explained" requires caution when applied to rank data. In parametric ANOVA, eta-squared directly reflects the proportion of total variance in the dependent variable accounted for by group membership. In the Kruskal-Wallis context, the "variance" being partitioned is rank variance, which captures relative ordering rather than absolute magnitude of differences. Two datasets with identical rank orderings but vastly different raw-score spreads will produce the same effect size. This means the effect size reflects how well group labels predict rank order, not how large the actual differences in the measured variable are.
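The rank-invariance point can be demonstrated directly. A strictly increasing transformation that greatly stretches the raw scores leaves every rank unchanged, and therefore leaves H and the effect size unchanged as well. The data and helpers below are hypothetical.

```python
import math
from collections import Counter


def average_ranks(values):
    """Rank values from 1..N, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for m in range(i, j + 1):
            ranks[order[m]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def kruskal_h(groups):
    """Tie-corrected Kruskal-Wallis H from raw group data."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        total += sum(ranks[start:start + len(g)]) ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * total - 3 * (n + 1)
    ties = sum(t**3 - t for t in Counter(pooled).values())
    return h / (1 - ties / (n**3 - n))


# Hypothetical scores for three groups
groups = [[1.0, 2.0, 3.5], [2.5, 4.0, 5.0], [4.5, 6.0, 7.0]]
# exp(3x) is strictly increasing: same ordering, vastly larger spread
stretched = [[math.exp(3 * x) for x in g] for g in groups]

n = sum(len(g) for g in groups)
print(kruskal_h(groups) / (n - 1), kruskal_h(stretched) / (n - 1))  # identical values
```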

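Additionally, eta-squared and epsilon-squared are sensitive to the number and size of groups. Because the expected value of H under the null hypothesis is k - 1, the simplified formula H/(N - 1) has an expected value of (k - 1)/(N - 1) even when no true effect exists. With five groups of four observations (N = 20), that baseline is already about 0.21, which the benchmarks above would classify as large. With many small groups, then, even moderate rank separation can produce inflated effect sizes. Conversely, with very large samples, trivially small rank differences can be statistically significant while the effect size remains near zero. This is precisely why reporting effect size alongside the p-value is considered essential in contemporary statistical practice.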
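This baseline inflation can be checked with a quick permutation simulation, assuming continuous data (no ties) and equal group sizes. Because group labels are shuffled at random, there is no true effect, yet the average simplified η² lands near (k - 1)/(N - 1). The function name, seed, and replication count are arbitrary choices of this sketch.

```python
import random


def null_eta_squared_mean(k, n_per_group, reps=4000, seed=1):
    """Average of H / (N - 1) when group labels are assigned at random (no true effect)."""
    rng = random.Random(seed)
    n = k * n_per_group
    ranks = list(range(1, n + 1))  # continuous data, so no ties
    total = 0.0
    for _ in range(reps):
        rng.shuffle(ranks)  # random assignment of ranks to groups
        sum_sq = sum(
            sum(ranks[g * n_per_group:(g + 1) * n_per_group]) ** 2 / n_per_group
            for g in range(k)
        )
        h = 12 / (n * (n + 1)) * sum_sq - 3 * (n + 1)
        total += h / (n - 1)
    return total / reps


for k, size in [(5, 4), (2, 100)]:
    n = k * size
    simulated = null_eta_squared_mean(k, size)
    print(f"k={k}, n per group={size}: simulated {simulated:.3f}, "
          f"expected {(k - 1) / (n - 1):.3f}")
```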