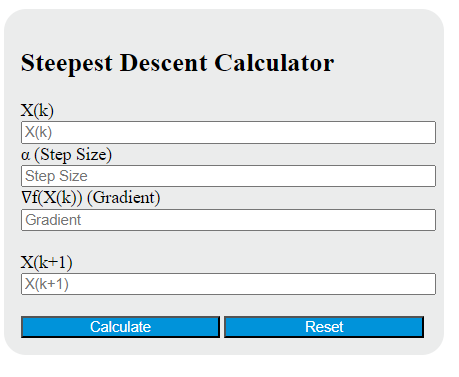

Enter the current point in the sequence, step size, and gradient into the calculator to determine the next point in the sequence using steepest descent.

Steepest Descent Formula

The steepest descent calculator performs a single optimization update. It takes the current point, the step size, and the gradient at that point, then returns the next point in the sequence. This is the core update used in iterative minimization methods for reducing a differentiable objective function.

X_{k+1} = X_k - \alpha \nabla f(X_k)

In plain terms, the method moves from the current point in the direction opposite the gradient. Because the gradient points toward the fastest local increase, subtracting it moves toward the fastest local decrease for that step.
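As a minimal sketch, the update can be written as a short Python function. The name `steepest_descent_step` and its parameter names are illustrative choices, not part of the calculator; points and gradients are assumed to be plain lists of floats of equal length.

```python
from typing import List, Sequence


def steepest_descent_step(
    point: Sequence[float], step_size: float, gradient: Sequence[float]
) -> List[float]:
    """Return X_{k+1} = X_k - alpha * grad f(X_k), computed coordinate by coordinate."""
    if len(point) != len(gradient):
        raise ValueError("point and gradient must have the same length")
    return [x - step_size * g for x, g in zip(point, gradient)]


# Hypothetical inputs: current point (1, -2), step size 0.5, gradient (4, -2).
next_point = steepest_descent_step([1.0, -2.0], 0.5, [4.0, -2.0])
print(next_point)  # [-1.0, -1.0]
```

Each coordinate is updated independently: 1 - 0.5(4) = -1 and -2 - 0.5(-2) = -1.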

What Each Input Means

- Current point — your present estimate of the minimizing value.

- Step size — how far to move during the update. This is often called the learning rate.

- Gradient — the slope or derivative information at the current point. Its sign and magnitude determine the direction and size of the correction.

- Next point — the updated estimate after one descent step.

For a one-variable function, the gradient is simply the derivative, so the update is often written as:

x_{k+1} = x_k - \alpha f'(x_k)

How the Update Behaves

- If the gradient is positive, the next point is smaller than the current point, because a positive quantity is subtracted (assuming a positive step size).

- If the gradient is negative, the next point is larger than the current point, because subtracting a negative quantity adds to the current point.

- If the gradient is zero, the update does not move. That may indicate a minimum, maximum, or saddle point depending on the function.

- If the gradient magnitude is large, the step correction is larger for the same step size.
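The four cases above can be checked with a small one-variable sketch. The starting point 2.0, the step size 0.5, and the sample gradient values are arbitrary illustrative choices, and `next_point` is a hypothetical helper name.

```python
def next_point(current: float, step_size: float, gradient: float) -> float:
    """One-variable descent update: x_{k+1} = x_k - alpha * f'(x_k)."""
    return current - step_size * gradient


current, step_size = 2.0, 0.5
# Positive, negative, zero, and large-magnitude gradients, in that order.
for gradient in (1.0, -1.0, 0.0, 4.0):
    result = next_point(current, step_size, gradient)
    print(f"gradient = {gradient:+.1f} -> next point = {result:.1f}")
```

Starting from 2.0, the next points are 1.5, 2.5, 2.0, and 0.0 respectively: a decrease, an increase, no movement, and a larger correction for the larger gradient.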

How to Use the Calculator

- Enter the current point.

- Enter the step size.

- Enter the gradient evaluated at the current point.

- Calculate the next point using the descent update.

- Repeat the process with the new point if you are performing multiple iterations.

This calculator is best understood as a one-step update tool. Full steepest descent is an iterative process, meaning the formula is applied again and again until a stopping condition is satisfied.

Example Calculation

If the current point is 3, the step size is 0.1, and the gradient is 2, then the next point is:

x_{k+1} = 3 - 0.1(2) = 2.8

That means the sequence moves from 3 to 2.8 after one descent step. If you continue the process, you would compute the gradient again at 2.8 and apply another update.
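To see what continuing the process might look like, the sketch below assumes a specific objective, f(x) = (x - 2)^2, chosen only because its derivative 2(x - 2) equals 2 at x = 3 and so matches the example. The example itself does not name a function, so the objective and the helper names here are assumptions.

```python
from typing import List


def gradient(x: float) -> float:
    """Derivative of the assumed objective f(x) = (x - 2)**2."""
    return 2.0 * (x - 2.0)


def descend(start: float, step_size: float, num_steps: int) -> List[float]:
    """Apply the descent update num_steps times, re-evaluating the gradient each time."""
    points = [start]
    for _ in range(num_steps):
        x = points[-1]
        points.append(x - step_size * gradient(x))
    return points


sequence = descend(start=3.0, step_size=0.1, num_steps=3)
print([f"{x:.4f}" for x in sequence])
# ['3.0000', '2.8000', '2.6400', '2.5120']
```

Under this assumption the gradient shrinks from 2 to 1.6 to 1.28 as the points approach 2, so each step is smaller than the one before it.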

Why Step Size Matters

The step size strongly affects performance:

- Too large — the method can overshoot the minimum, oscillate, or even diverge.

- Too small — the method is stable but may converge very slowly.

- Well chosen — the sequence decreases the function efficiently and approaches a minimum more quickly.

The actual change applied at each iteration is the negative of the product of the step size and the gradient:

\Delta x = -\alpha \nabla f(X_k)

This makes interpretation simple: the gradient decides the direction, and the step size scales the movement.
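The effect of the three step-size regimes can be illustrated on a simple assumed objective, f(x) = x^2, whose derivative is 2x and whose minimum is at 0. The step sizes 0.01, 0.4, and 1.1 are illustrative values for this function only; what counts as too large or too small depends on the objective.

```python
def run(step_size: float, num_steps: int = 10, start: float = 1.0) -> float:
    """Repeat x <- x - step_size * f'(x) for the assumed objective f(x) = x**2."""
    x = start
    for _ in range(num_steps):
        x = x - step_size * (2.0 * x)  # f'(x) = 2x
    return x


for label, step_size in [("too small", 0.01), ("well chosen", 0.4), ("too large", 1.1)]:
    final_x = run(step_size)
    print(f"{label:>11} (alpha = {step_size}): x after 10 steps = {final_x:.6g}")
```

For this objective each update multiplies x by (1 - 2α). With α = 0.01 the factor is 0.98, so the point barely moves; with α = 0.4 it is 0.2, so the point approaches 0 quickly; with α = 1.1 it is -1.2, so the point flips sign and grows in magnitude at every step.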

When Steepest Descent Works Well

- Smooth, differentiable objective functions

- Problems where a local minimum is acceptable

- Moderate gradients and a sensible fixed step size

- Introductory optimization, numerical methods, and machine learning practice problems

Common Pitfalls

- Using the wrong sign — adding the gradient performs ascent, not descent.

- Keeping the same gradient — in iterative optimization, the gradient usually changes after every update.

- Ignoring scaling — poorly scaled problems can make descent zig-zag and slow.

- Expecting one step to solve everything — a single update rarely lands exactly on the minimum.

- Assuming every zero gradient is a minimum — additional analysis may be needed.
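The scaling pitfall in the list above can be made concrete with a small sketch. It assumes a hypothetical, poorly scaled objective f(x, y) = 0.5(x^2 + 25y^2), whose gradient is (x, 25y), together with an illustrative step size of 0.07; none of these values come from the calculator.

```python
from typing import List, Tuple


def gradient(x: float, y: float) -> Tuple[float, float]:
    """Gradient of the assumed objective f(x, y) = 0.5 * (x**2 + 25 * y**2)."""
    return x, 25.0 * y


def run(step_size: float, num_steps: int) -> List[Tuple[float, float]]:
    """Record the path of repeated descent updates starting from (1, 1)."""
    path = [(1.0, 1.0)]
    for _ in range(num_steps):
        x, y = path[-1]
        gx, gy = gradient(x, y)
        path.append((x - step_size * gx, y - step_size * gy))
    return path


path = run(step_size=0.07, num_steps=6)
for x, y in path:
    print(f"x = {x:.4f}, y = {y:+.4f}")
```

In this sketch the y coordinate changes sign at every step, which is the zig-zag, while x shrinks by only 7% per step. A smaller step size would remove the oscillation in y but slow the progress in x even further.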

Typical Stopping Conditions

When steepest descent is used as a full algorithm, iteration often stops when one of these conditions is met:

\|\nabla f(X_k)\| < \varepsilon

\|X_{k+1} - X_k\| < \varepsilon

|f(X_{k+1}) - f(X_k)| < \varepsilon

These conditions check whether the slope is small, the position change is tiny, or the objective value is no longer improving in a meaningful way.
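One way the three conditions might be combined into a full loop is sketched below. The function name `steepest_descent`, the parameters `tol` and `max_iter`, the iteration cap itself, and the test objective f(x, y) = (x - 1)^2 + (y + 2)^2 are all assumptions made for illustration.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]


def norm(v: Sequence[float]) -> float:
    """Euclidean norm of a vector."""
    return math.sqrt(sum(c * c for c in v))


def steepest_descent(
    f: Callable[[Vector], float],
    grad: Callable[[Vector], Vector],
    start: Sequence[float],
    step_size: float,
    tol: float = 1e-8,
    max_iter: int = 10_000,
) -> Tuple[Vector, int, str]:
    """Iterate the descent update until one stopping condition is met."""
    x = list(start)
    for k in range(max_iter):
        g = grad(x)
        if norm(g) < tol:  # the slope is small
            return x, k, "small gradient"
        x_next = [xi - step_size * gi for xi, gi in zip(x, g)]
        if norm([a - b for a, b in zip(x_next, x)]) < tol:  # the position change is tiny
            return x_next, k + 1, "small step"
        if abs(f(x_next) - f(x)) < tol:  # the objective is no longer improving
            return x_next, k + 1, "small change in objective"
        x = x_next
    return x, max_iter, "iteration limit reached"  # safeguard, not one of the three tests


def f(p: Vector) -> float:
    """Assumed objective with its minimum at (1, -2)."""
    return (p[0] - 1.0) ** 2 + (p[1] + 2.0) ** 2


def grad(p: Vector) -> Vector:
    """Gradient of the assumed objective."""
    return [2.0 * (p[0] - 1.0), 2.0 * (p[1] + 2.0)]


point, iterations, reason = steepest_descent(f, grad, [0.0, 0.0], step_size=0.25)
print(point, iterations, reason)
```

Using a single tolerance for all three tests is a simplification; in practice separate tolerances are often chosen because the gradient, the position, and the objective value can have very different scales.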

Steepest Descent vs. Gradient Descent

In many introductory contexts, the terms steepest descent and gradient descent are used interchangeably. Both refer to moving opposite the gradient to reduce the objective function. In more advanced optimization settings, “steepest” can depend on the norm used to define direction, but for standard Euclidean problems the update above is the familiar gradient-based minimization step.

Practical Interpretation

If you think of the objective function as a surface, the gradient points uphill. Steepest descent chooses the local downhill direction and takes a step of controlled size. Repeating that process traces a path toward a nearby low point of the surface.

Quick FAQ

Can the next point ever make the function larger?

If the step size is too large, yes. The update formula points in a descent direction, but the chosen step length still matters.

What happens if the gradient is zero?

The next point equals the current point. That may represent convergence, but it can also correspond to a flat region, maximum, or saddle point.

Is this calculator for one iteration or many?

It computes one update at a time. For a full optimization sequence, recalculate the gradient at each new point and repeat.

Can this be used in machine learning?

Yes. The same update structure appears in many training algorithms where a loss function is minimized by repeatedly adjusting parameters opposite the gradient.