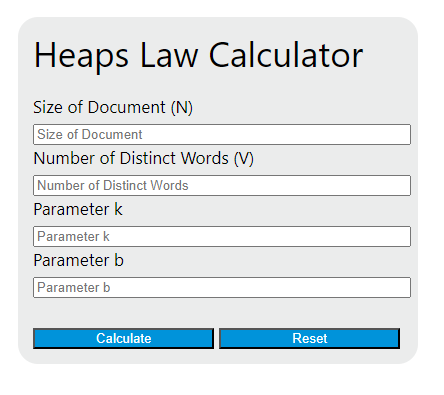

Calculate any missing Heaps' Law value by entering three of the four quantities: document size, parameter k, parameter b, and distinct word count.

Heaps’ Law Formula

Heaps’ Law models vocabulary growth in a document or corpus. It estimates how many distinct words appear as the total number of words increases. This is useful in linguistics, information retrieval, corpus analysis, and natural language processing when you want a fast estimate of lexical variety from document size.

$$V = k \cdot N^{b}$$

| Variable | Meaning | Practical Interpretation |
|---|---|---|
| V | Number of distinct words | The estimated count of unique word types in the text. |
| N | Document size | The total number of words or tokens in the document or corpus. |
| k | Scale parameter | Shifts the vocabulary curve upward or downward for a given corpus. |
| b | Growth exponent | Controls how quickly new vocabulary appears as the text gets longer. |

Heaps’ Law is a sublinear growth model. That means vocabulary usually grows more slowly than document length.

$$0 < b < 1$$

For realistic word counts, the number of distinct words should not exceed the total number of words.

$$V \le N$$
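As a minimal sketch of the formula and the two constraints above, the Python functions below compute the estimate and check it against the document size. The names `heaps_vocabulary` and `is_plausible` are illustrative choices, not part of any calculator or library.

```python
def heaps_vocabulary(n_tokens: float, k: float, b: float) -> float:
    """Estimate the distinct-word count V = k * N^b."""
    if n_tokens <= 0 or k <= 0:
        raise ValueError("n_tokens and k must be positive")
    if not 0 < b < 1:
        # Heaps' Law is sublinear, so b is expected to lie strictly in (0, 1).
        raise ValueError("b must lie strictly between 0 and 1")
    return k * n_tokens ** b


def is_plausible(v: float, n_tokens: float) -> bool:
    """Return True when the estimate respects V <= N."""
    return v <= n_tokens
```

With a large k and a very small N, the raw formula can predict more distinct words than there are words in total (for instance k = 50, b = 0.5, N = 4), which is why the plausibility check is kept separate from the estimate itself.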

How to Use the Heaps’ Law Calculator

- Enter the total document size as the number of tokens or words.

- Enter the parameter k for the corpus, language, or tokenization method you are using.

- Enter the parameter b to represent the expected rate of vocabulary growth.

- The calculator returns the estimated number of distinct words.

- If your calculator is set up to solve for a missing field, enter any three values to compute the fourth.

The output is only as good as the parameters you use. If the text source, preprocessing rules, or language changes, the values of k and b can change as well.

Rearranged Forms of the Equation

If you know three variables and want to solve for the fourth, these equivalent forms are helpful.

$$N = \left(\frac{V}{k}\right)^{1/b}$$

$$k = \frac{V}{N^{b}}$$

$$b = \frac{\ln(V / k)}{\ln(N)}$$

These forms require positive values of N, V, and k. Solving for b also requires that the document size is not equal to 1, because ln(1) = 0 would make the denominator zero.

$$N > 0,\; V > 0,\; k > 0,\; N \ne 1$$
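The rearranged forms can be collected into a single solver that takes three known values and returns the fourth. This is only a sketch under the conditions stated above; the function name `solve_heaps` and its keyword arguments are hypothetical and do not describe how any particular calculator is implemented.

```python
import math
from typing import Optional


def solve_heaps(n: Optional[float] = None, k: Optional[float] = None,
                b: Optional[float] = None, v: Optional[float] = None) -> float:
    """Return whichever of n, k, b, v was left as None."""
    values = (("n", n), ("k", k), ("b", b), ("v", v))
    missing = [name for name, val in values if val is None]
    if len(missing) != 1:
        raise ValueError("exactly one of n, k, b, v must be None")
    # n, k and v must be positive wherever they are supplied.
    for name, val in (("n", n), ("k", k), ("v", v)):
        if val is not None and val <= 0:
            raise ValueError(f"{name} must be positive")
    target = missing[0]
    if target == "v":
        return k * n ** b
    if target == "n":
        if b == 0:
            raise ValueError("b must be non-zero to solve for n")
        return (v / k) ** (1 / b)
    if target == "k":
        return v / n ** b
    # Remaining case: solve for b, which needs ln(n) != 0.
    if n == 1:
        raise ValueError("n must not equal 1 when solving for b")
    return math.log(v / k) / math.log(n)
```

For example, `solve_heaps(n=10000, k=50, v=5000)` recovers an exponent of about 0.5.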

What the Parameters Tell You

| Parameter Change | Effect on Result | Interpretation |
|---|---|---|
| Larger k | Higher estimated distinct-word count at every document size | The corpus starts with richer vocabulary for the same amount of text. |
| Smaller k | Lower estimated distinct-word count | The corpus is more repetitive or more normalized. |
| Larger b | Faster vocabulary growth as the text expands | New words continue appearing more rapidly. |
| Smaller b | Slower vocabulary growth | The text repeats existing words more often. |

Why Vocabulary Growth Slows Down

At the beginning of a document, many words are new. As the document gets longer, repeated words appear more often, so the rate at which new words are discovered falls. Heaps’ Law captures that behavior with an exponent b below 1.

A closely related measure is the type-token ratio, which compares distinct words to total words.

$$\mathrm{TTR} = \frac{V}{N} = k \cdot N^{b - 1}$$

Because b is usually below 1, the exponent b - 1 on N is negative in this form, so lexical diversity measured by type-token ratio tends to fall as document length increases. That is why comparing raw type-token ratios across documents of very different sizes can be misleading.
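To make the decline concrete, the sketch below evaluates the model's type-token ratio at three document sizes. The function name `type_token_ratio` and the parameter values k = 50 and b = 0.5 are chosen purely for illustration.

```python
def type_token_ratio(n_tokens: float, k: float, b: float) -> float:
    """Model-based type-token ratio: TTR = V / N = k * N^(b - 1)."""
    if n_tokens <= 0 or k <= 0:
        raise ValueError("n_tokens and k must be positive")
    return k * n_tokens ** (b - 1)


# Same hypothetical parameters, growing document size.
for n in (10_000, 40_000, 160_000):
    print(n, round(type_token_ratio(n, k=50, b=0.5), 4))
```

Each fourfold increase in length halves the ratio under these parameters, even though the underlying text source is assumed to be the same.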

Example Calculation

| Input | Value |
|---|---|
| Document size | 10,000 |
| k | 50 |
| b | 0.5 |

$$V = 50 \cdot 10000^{0.5} = 50 \cdot 100 = 5000$$

The model estimates 5,000 distinct words in a 10,000-word document.

The growth rule can also be compared across two document sizes.

$$\frac{V_2}{V_1} = \left(\frac{N_2}{N_1}\right)^{b}$$

If the document length quadruples while k and b stay the same, and the exponent is 0.5, the distinct-word count scales by the square root of four.

$$\frac{V_2}{V_1} = 4^{0.5} = 2$$

So under that setting, quadrupling the text doubles the predicted vocabulary.
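The scaling rule is easy to check numerically. The sketch below uses a hypothetical helper, `vocabulary_growth_ratio`, which depends only on the two document sizes and b because k cancels in the ratio.

```python
def vocabulary_growth_ratio(n1: float, n2: float, b: float) -> float:
    """Return V2 / V1 = (N2 / N1)^b for two document sizes with shared k and b."""
    if n1 <= 0 or n2 <= 0:
        raise ValueError("document sizes must be positive")
    return (n2 / n1) ** b


# Quadrupling a 10,000-word document with b = 0.5.
ratio = vocabulary_growth_ratio(10_000, 40_000, 0.5)
print(ratio)
```

Applied to the worked example, a ratio of 2 takes the estimate from 5,000 distinct words at 10,000 tokens to 10,000 distinct words at 40,000 tokens.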

Estimating k and b from Real Text

If you have sample documents from the same domain, you can estimate the parameters by measuring document size and distinct-word count across multiple samples, then fitting a straight line to the points on log-log axes.

$$\ln(V) = \ln(k) + b \ln(N)$$

In that linearized form, the slope gives b and the intercept gives ln(k). This is helpful when building corpus-specific models rather than relying on generic parameter choices.
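One simple way to carry out that fit is ordinary least squares on the logged values. The sketch below uses only the standard library; the function name `fit_heaps` is hypothetical, and the sample points are synthetic values generated from k = 50 and b = 0.5, not measurements from a real corpus.

```python
import math
from typing import Sequence, Tuple


def fit_heaps(sizes: Sequence[float], vocab: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of ln(V) = ln(k) + b * ln(N); returns (k, b)."""
    if len(sizes) != len(vocab) or len(sizes) < 2:
        raise ValueError("need at least two (N, V) pairs of equal length")
    xs = [math.log(n) for n in sizes]
    ys = [math.log(v) for v in vocab]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("document sizes must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx                          # slope of the log-log line
    k = math.exp(mean_y - b * mean_x)      # intercept is ln(k)
    return k, b


# Synthetic samples generated from the model itself (k = 50, b = 0.5).
sample_sizes = [1_000, 10_000, 100_000]
sample_vocab = [50 * n ** 0.5 for n in sample_sizes]
k_hat, b_hat = fit_heaps(sample_sizes, sample_vocab)
print(k_hat, b_hat)
```

On real text the points will not fall exactly on a line, so the fitted values are best read as a summary of average behavior over the sampled range of document sizes.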

Common Applications

- Estimating vocabulary size in a book, article collection, or dataset

- Planning memory or index size in search and text-mining systems

- Comparing lexical growth across genres, authors, or languages

- Evaluating preprocessing choices such as stemming, lemmatization, or case normalization

- Studying how quickly new terms appear in growing corpora

Important Limits and Assumptions

- Heaps’ Law is empirical, not exact. It is a fitted approximation.

- Results depend heavily on tokenization rules, punctuation handling, case sensitivity, and normalization.

- The same values of k and b may not transfer well across very different domains.

- Very short texts often produce unstable estimates because vocabulary growth is noisy at small sample sizes.

- The model summarizes average behavior and may not capture abrupt topic shifts or mixed-language content.
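The dependence on tokenization and normalization can be seen even in a toy case. The sketch below counts N and V with a deliberately simple regular-expression tokenizer; the function name `count_tokens_and_types` and the tokenization rule (runs of ASCII letters and apostrophes) are assumptions made for illustration, not a recommended tokenizer.

```python
import re
from typing import Tuple


def count_tokens_and_types(text: str, lowercase: bool = True) -> Tuple[int, int]:
    """Return (N, V): total tokens and distinct tokens in the text."""
    # Assumed rule: a token is a run of ASCII letters or apostrophes.
    tokens = re.findall(r"[A-Za-z']+", text)
    if lowercase:
        tokens = [t.lower() for t in tokens]
    return len(tokens), len(set(tokens))


sample = "The cat saw the other cat."
print(count_tokens_and_types(sample, lowercase=True))
print(count_tokens_and_types(sample, lowercase=False))
```

Case folding merges "The" and "the" into one type, so the same sentence yields a smaller V with lowercasing than without it. Parameters fitted under one preprocessing rule should therefore not be reused under another.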

Practical Interpretation of the Result

Use the calculator output as an estimate of expected vocabulary richness for a text of a given size. A larger predicted value suggests broader lexical variety, while a smaller predicted value suggests more repetition or stronger normalization. For best results, keep the document type, language, and preprocessing rules consistent when comparing outputs.