Calculate accuracy, true positives, true negatives, or total samples from the other three values in a simple classification accuracy calculator.

Accuracy Formula

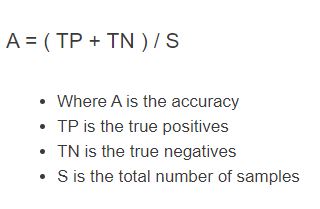

The following formula is used to calculate the classification accuracy of a test.

$$A(\%) = \frac{TP + TN}{S} \times 100$$

- Where A is the accuracy (percent)

- TP is the number of true positives

- TN is the number of true negatives

- S is the total number of samples (TP + TN + false positives + false negatives)

To calculate the accuracy of a test, sum the true positives and true negatives, then divide by the total number of samples. If you want a percent, multiply the result by 100.
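As a rough illustration, the formula and its rearrangements (solving for any one of the four values given the other three) might be sketched in Python as follows. The function names are hypothetical and chosen only for this example; the sample numbers are the ones used in the FAQ on this page.

```python
def accuracy_percent(tp: int, tn: int, total: int) -> float:
    """Accuracy as a percentage: A = (TP + TN) / S * 100."""
    if total <= 0:
        raise ValueError("total samples must be positive")
    if tp < 0 or tn < 0 or tp + tn > total:
        raise ValueError("counts must be non-negative and TP + TN <= total")
    # Multiply before dividing to limit floating-point rounding.
    return 100 * (tp + tn) / total


def true_positives(accuracy_pct: float, tn: float, total: float) -> float:
    """Rearranged for TP: TP = A * S / 100 - TN."""
    return accuracy_pct * total / 100 - tn


def true_negatives(accuracy_pct: float, tp: float, total: float) -> float:
    """Rearranged for TN: TN = A * S / 100 - TP."""
    return accuracy_pct * total / 100 - tp


def total_samples(accuracy_pct: float, tp: float, tn: float) -> float:
    """Rearranged for S: S = 100 * (TP + TN) / A."""
    if accuracy_pct <= 0:
        raise ValueError("accuracy must be positive to solve for total samples")
    return 100 * (tp + tn) / accuracy_pct


if __name__ == "__main__":
    print(accuracy_percent(40, 50, 100))   # 90.0
    print(true_positives(90.0, 50, 100))   # 40.0
    print(total_samples(90.0, 40, 50))     # 100.0
```

The rearranged functions return floats because an arbitrary accuracy value will not always correspond to a whole number of samples.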

Accuracy Definition

Accuracy is the proportion of all results that are correct. In a classification setting, it is the number of correct classifications (true positives + true negatives) divided by the total number of samples.
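Because the total number of samples counts every outcome, the definition can also be written out in terms of the four possible results. Here FP and FN are shorthand, assumed for this illustration, for the false positives and false negatives:

$$\text{Accuracy} = \frac{TP + TN}{S} = \frac{TP + TN}{TP + TN + FP + FN}$$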

Accuracy Example

How to calculate accuracy?

- First, determine the number of true positives.

Add up the total number of true positives produced by the test.

- Next, determine the number of true negatives.

Add up the total number of true negatives produced.

- Finally, calculate the accuracy.

Calculate the accuracy by summing the true positives and true negatives, then dividing by the total number of samples (the total number of tests performed).
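The three steps above can be sketched in Python. This is a minimal example under stated assumptions: the helper `count_outcomes` and the two lists of made-up results are hypothetical, with `True` standing for a positive and `False` for a negative.

```python
def count_outcomes(actual, predicted):
    """Count true positives, true negatives, and total samples."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    if not actual:
        raise ValueError("at least one sample is required")
    pairs = list(zip(actual, predicted))
    # Step 1: true positives are cases that are positive and were predicted positive.
    tp = sum(1 for a, p in pairs if a and p)
    # Step 2: true negatives are cases that are negative and were predicted negative.
    tn = sum(1 for a, p in pairs if not a and not p)
    return tp, tn, len(pairs)


# Hypothetical results for eight tests (illustrative data only).
actual    = [True, True, False, False, True, False, False, True]
predicted = [True, False, False, False, True, True, False, True]

tp, tn, total = count_outcomes(actual, predicted)

# Step 3: sum the correct results and divide by the total number of samples.
accuracy = 100 * (tp + tn) / total

print(f"TP={tp}, TN={tn}, S={total}, accuracy={accuracy}%")
# TP=3, TN=3, S=8, accuracy=75.0%
```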

FAQ

Accuracy is the percentage of all cases a test or classifier gets correct. For example, if a test produces 40 true positives and 50 true negatives out of 100 total samples, then accuracy = (40 + 50) / 100 = 0.90 = 90%.

Accuracy is used across many fields to summarize how often a classifier or diagnostic test is correct (for example, in medical testing, machine learning, and quality control).